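Working on an old RHEL 4.x machine is a real pain. Its bash doesn't support hashes (associative arrays), and many of the bundled Perl libraries are full of bugs. Today I set out to write a script and hit yet another problem: the bundled LWP library is so old that it caused a segmentation fault.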

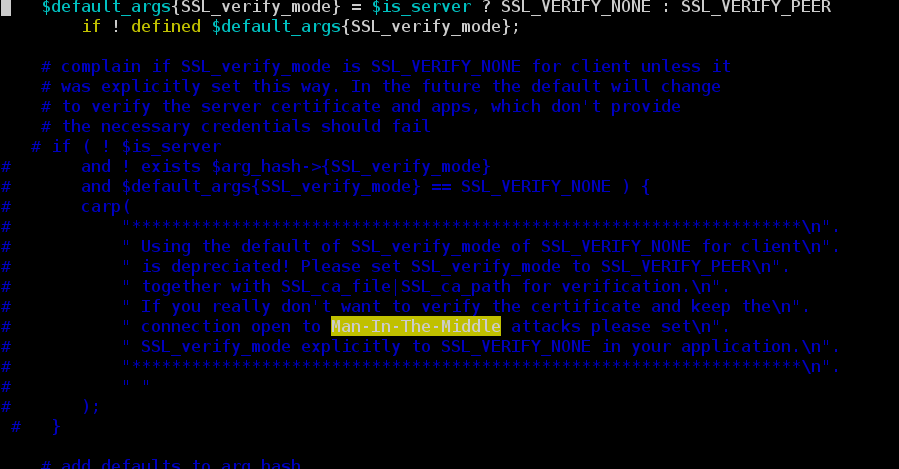

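However, now every script run prints a block of warnings. LWP's user agent doesn't seem to have a parameter I can pass in to turn this off, and many older scripts would have to be changed too, so I simply commented out the warning message in the module below. I really don't want to fiddle with this old box any further.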

/usr/lib/perl5/site_perl/5.8.5/IO/Socket/SSL.pm

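A long, long time ago, when nginx's upstream did not yet support HTTP/1.1, nginx could only use short-lived connections to the backend when acting as a reverse proxy. Consider the following setup:

Client --HTTP/1.1 keepalive--> Nginx (proxy) --HTTP/1.1 Connection: close--> APP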

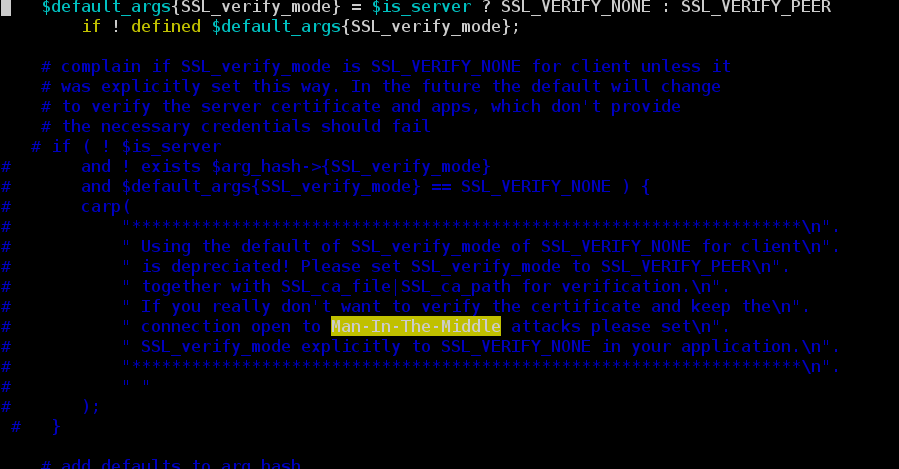

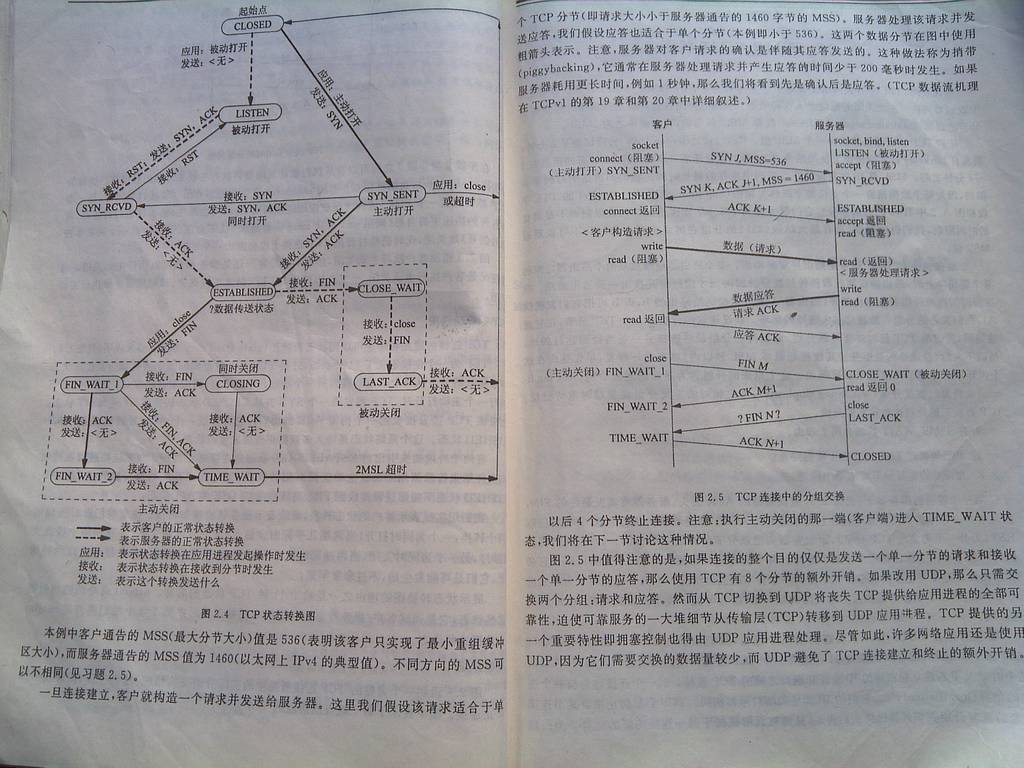

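You will often see a large number of connections in the TIME_WAIT state on the backend APP. Over the past few days I re-read TCP/IP Illustrated and UNP. The state transition diagram is shown here.

According to the diagram, if the client sends the first FIN (an active close by the client), the server never enters TIME_WAIT.

If the client and the server close at the same time, both sides end up in TIME_WAIT.

When Apache is set to KeepAlive Off, it actively closes the connection after every request, so the number of TIME_WAIT connections is very high.

As long as the close is not initiated entirely by the client, the server will end up with TIME_WAIT.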
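The rules above can be condensed into a tiny model. This is only a sketch in Python that encodes the standard TCP teardown paths as lists; the names `close_paths` and `who_gets_time_wait` are made up for illustration and it is not a real TCP stack.

```python
# Simplified model of TCP connection teardown (illustrative only).
# Each list is the sequence of states one endpoint passes through.
ACTIVE_CLOSE = ["ESTABLISHED", "FIN_WAIT_1", "FIN_WAIT_2", "TIME_WAIT", "CLOSED"]
PASSIVE_CLOSE = ["ESTABLISHED", "CLOSE_WAIT", "LAST_ACK", "CLOSED"]
SIMULTANEOUS_CLOSE = ["ESTABLISHED", "FIN_WAIT_1", "CLOSING", "TIME_WAIT", "CLOSED"]


def close_paths(first_fin):
    """Return (client_path, server_path) given who sends the first FIN.

    first_fin is "client", "server", or "both" (simultaneous close).
    """
    if first_fin == "client":
        return ACTIVE_CLOSE, PASSIVE_CLOSE
    if first_fin == "server":
        return PASSIVE_CLOSE, ACTIVE_CLOSE
    if first_fin == "both":
        return SIMULTANEOUS_CLOSE, SIMULTANEOUS_CLOSE
    raise ValueError("first_fin must be 'client', 'server', or 'both'")


def who_gets_time_wait(first_fin):
    """Return the set of endpoints that pass through TIME_WAIT."""
    client_path, server_path = close_paths(first_fin)
    result = set()
    if "TIME_WAIT" in client_path:
        result.add("client")
    if "TIME_WAIT" in server_path:
        result.add("server")
    return result


if __name__ == "__main__":
    for scenario in ("client", "server", "both"):
        print(scenario, "->", sorted(who_gets_time_wait(scenario)))
```

With KeepAlive Off the scenario is "server", which is why the TIME_WAIT sockets pile up on the Apache side.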

I captured packets separately with Apache's KeepAlive set to On and to Off.

Off

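In both captures above, the client is 10.253.85.156.

You can clearly see that with KeepAlive set to On the client always sends the FIN first, while with Off the server always sends the FIN first to close the connection.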

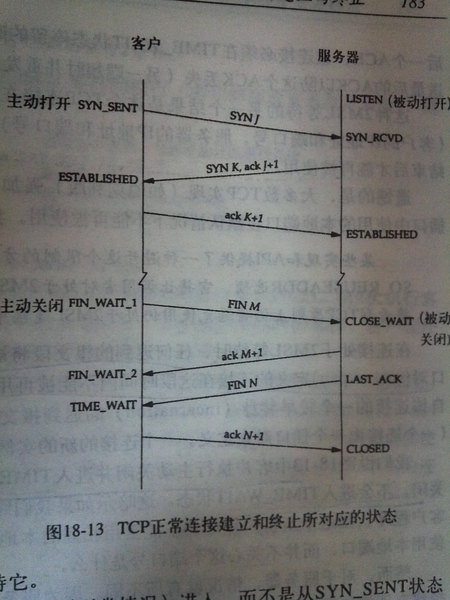

This is also explained in TCP/IP Illustrated, Volume 3.

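Today I found that a production machine had a lot of connections in the CLOSE_WAIT state, close to 10,000.

I went through TCP/IP Illustrated again. The cause is basically this: the local machine opens connections to port 8080 on a remote host; after finishing a call, the remote server actively closes the connection and sends a FIN to the local server, and then nothing further happens, which leaves the local side in CLOSE_WAIT.

Normally the local server should reply with an ACK and then send its own FIN to the remote server, and the connection can only reach CLOSED after the peer ACKs that FIN.

To confirm my guess I also checked the "remote server" and found many connections in FIN_WAIT2. FIN_WAIT2 connections are reclaimed after 60s by default; if there are a great many of them, you can lower tcp_fin_timeout. The cause of CLOSE_WAIT is simply that the local side never calls close on the connection.

For example, after

connfd = accept(listenfd,xxx,xx)

the code finishes working with connfd and then just forgets about it, while the peer calls close once it is done. From then on the local side stays in CLOSE_WAIT. Many people online claim this state is reclaimed after 2 hours, but in my actual tests Linux never reclaims it.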
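The fix on the application side is to always close the accepted socket once the peer has finished. Below is a minimal sketch in Python, assuming a trivial echo service on the loopback interface; `serve_once` and `demo` are hypothetical names of mine, not code from the affected application.

```python
import socket
import threading


def serve_once(listen_sock):
    """Accept one connection, echo what the peer sends, and always close it."""
    conn, _addr = listen_sock.accept()
    try:
        while True:
            data = conn.recv(4096)
            if not data:
                # recv() returning b"" means the peer sent its FIN;
                # this socket is now in CLOSE_WAIT until we close it.
                break
            conn.sendall(data)
    finally:
        conn.close()  # sends our FIN so the socket can leave CLOSE_WAIT


def demo():
    listen_sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listen_sock.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    listen_sock.listen(1)
    port = listen_sock.getsockname()[1]

    worker = threading.Thread(target=serve_once, args=(listen_sock,))
    worker.start()

    client = socket.create_connection(("127.0.0.1", port))
    client.sendall(b"ping")
    reply = client.recv(4096)
    client.close()  # the peer closes first, as in the scenario above

    worker.join(timeout=5)
    listen_sock.close()
    return reply, worker.is_alive()


if __name__ == "__main__":
    print(demo())
```

The `try`/`finally` is the important part: whatever happens while handling the request, the descriptor gets closed and the connection does not linger in CLOSE_WAIT.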

叔度's blog also mentions a similar problem. This kind of situation is almost always a software bug.

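I ran into a problem when using nginx as a global proxy and load-balancing cluster. The front nginx tier has many machines (M), each nginx machine runs many worker processes (N), and the keepalive value was set equal to the number of machines in the upstream.

In this setup, if the backend machines have a low connection limit, you are in trouble. Normally each backend machine holds about M*N + XX persistent connections, so the connection utilization is XX/(M*N+XX). When there are many front machines and many processes per machine, utilization becomes very low. Health checks against the backends then fail, the backends get kicked out, and that triggers an avalanche.

If the backend application cannot be changed, there are only two options:

1. Switch to short-lived connections. Overall response time may go up somewhat.

2. Lower the keepalive value appropriately, although lowering it too much makes it behave almost like short-lived connections. It is hard to quantify the best value; you can only keep testing.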
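To get a feel for the utilization formula, here is a small worked example in Python. The function name and the numbers are hypothetical, and I am assuming that XX stands for the connections actually doing work.

```python
def keepalive_utilization(front_machines, workers_per_machine, xx):
    """Utilization XX / (M*N + XX) from the estimate above.

    front_machines      -- M, number of front nginx machines
    workers_per_machine -- N, nginx worker processes per machine
    xx                  -- the XX term (assumed: connections actually in use)
    """
    total = front_machines * workers_per_machine + xx
    return xx / total


# Hypothetical numbers: 20 front machines, 8 workers each, XX = 40.
print(round(keepalive_utilization(20, 8, 40), 3))
```

Doubling M or N with the same XX pushes the ratio down further, which is the effect described above.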

The same problem also exists in Apache's mod_jk module.

[text]
Timeout 180
KeepAlive On
MaxKeepAliveRequests 1000
KeepAliveTimeout 360

<IfModule worker.c>
StartServers 10
ServerLimit 50
MaxClients 1500
MinSpareThreads 50
MaxSpareThreads 200
ThreadsPerChild 50
MaxRequestsPerChild 10000
</IfModule>

worker.local.type=ajp13
worker.local.host=localhost
worker.local.port=7001
worker.local.lbfactor=50 # weight of this worker when there are multiple workers
worker.local.cachesize=100 # connection pool size per process; ideally equal to the max threads per Apache process
worker.local.cache_timeout=600
worker.local.socket_keepalive=1 # whether to enable keepalive
worker.local.recycle_timeout=300 # recycle time for idle connections

See http://tomcat.apache.org/connectors-doc/reference/workers.html
[/text]

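With the configuration above, it is easy for Apache's mod_jk to exhaust the backend Tomcat's connection limit (400) while many of those connections are actually idle. The main reason is that each process keeps its own independent state: each process can hold up to 100 connections, so the total is hard to control, especially since the idle-connection recycle time above is set unreasonably. In my tests, shortening the recycle time to 1 and lowering cachesize to 50 improved things a lot.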
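A rough upper bound makes the mismatch obvious. The sketch below is in Python with a hypothetical function name, and it follows the reasoning above that every Apache process may hold up to `cachesize` connections. The number of processes is bounded by ServerLimit and by MaxClients / ThreadsPerChild.

```python
def max_jk_connections(max_clients, threads_per_child, server_limit, cachesize):
    """Upper bound on mod_jk connections to one Tomcat (rough estimate)."""
    processes = min(server_limit, max_clients // threads_per_child)
    return processes * cachesize


# Values from the configuration above.
print(max_jk_connections(1500, 50, 50, 100))  # cachesize=100
print(max_jk_connections(1500, 50, 50, 50))   # cachesize=50
```

Both bounds are far above Tomcat's limit of 400, which fits the observation that the idle recycle time matters as much as cachesize.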

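Today's goal was to reproduce a production problem. I set up nginx on a few machines to simulate a backend server that cannot keep up when nginx acts as a proxy. The basic structure is:

client --> nginx (proxy) --> nginx server (limit rps 400/s)

Because I only had 3 machines in total, the backend nginx server is configured like this: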

[text]
limit_conn_zone $binary_remote_addr zone=connzone:10m;
limit_req_zone $binary_remote_addr zone=reqzone:10m rate=400r/s;

server {
    listen 1081;
    server_name localhost;
    keepalive_requests 1000;

    location / {
        root /var/www/httpdtest;
        index index.html index.htm;
        limit_req zone=reqzone burst=50;  # cap per-IP RPS at 400; up to 50 excess requests may wait
        limit_conn connzone 400;          # cap per-IP concurrent connections at 400
        limit_req_log_level info;
        limit_conn_log_level info;
    }

    location /status {
        stub_status on;
        access_log off;
    }
}
[/text]

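I ran a simple comparison between the backend with and without rate limiting.

Without rate limiting on the backend, the proxy's load during the stress test was very high: on this single-core machine CPU usage reached 100%, with usr, sys, and soft each taking about 30%. The backend server's load was fairly low, only about 20%.

With rate limiting on the backend, the proxy returned a large number of 503s and its load was still very high, basically the same as before, but the backend server's load was also higher, fluctuating between 30% and 80%.

The backend server's logs show that many health-check requests were answered with 503, which means servers were being kicked out.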
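To see why health checks get caught, it helps to model the limiter. The following is a simplified Python sketch of the leaky-bucket idea behind `limit_req`. It is not nginx's actual code, the class name is hypothetical, and it ignores the delaying of queued requests. Because the key is `$binary_remote_addr` and all traffic arrives from the proxy's address, health checks presumably share one bucket with regular requests.

```python
class LeakyBucket:
    """Simplified leaky bucket: `rate` requests/second plus `burst` excess."""

    def __init__(self, rate, burst):
        self.rate = rate      # allowed requests per second
        self.burst = burst    # how many requests may exceed the rate
        self.excess = 0.0     # requests currently above the allowed rate
        self.last = None      # timestamp of the last accepted request

    def allow(self, now):
        """Return True if a request arriving at time `now` is accepted."""
        if self.last is None:
            self.last = now
            return True
        elapsed = max(0.0, now - self.last)
        # The bucket drains at `rate` per second; this request adds one.
        excess = max(0.0, self.excess - self.rate * elapsed + 1.0)
        if excess > self.burst:
            return False      # in this model the request would get a 503
        self.excess = excess
        self.last = now
        return True


if __name__ == "__main__":
    bucket = LeakyBucket(rate=400, burst=50)
    accepted = sum(bucket.allow(0.0) for _ in range(100))
    print("accepted out of 100 simultaneous requests:", accepted)
    print("request one second later accepted:", bucket.allow(1.0))
```

Once the bucket is full, any request from that address is rejected until it drains, and a health check is just another request.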

While I was at it, I also tested the Apache configuration. The previous worker configuration was:

[text]
Timeout 180
KeepAlive On
MaxKeepAliveRequests 100
KeepAliveTimeout 360

<IfModule worker.c>
StartServers 10
ServerLimit 50
MaxClients 1500
MinSpareThreads 50
MaxSpareThreads 200
ThreadsPerChild 50
MaxRequestsPerChild 10000
</IfModule>
[/text]

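At a glance, many of these settings look unreasonable. The earlier test results were about 1700 requests/s with keepalive on and only 1500-1600 requests/s with keepalive off.

Later I raised the concurrency considerably and tested again. The gain was very limited, reaching only about 1800 requests/s, so basically nothing changed.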

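Enabling persistent connections on the proxy avoids a three-way handshake with the backend for every request and makes the backend more efficient. That is why nginx finally added keepalive support for upstreams in version 1.1.4. haproxy already supported persistent connections to the backend, but in that mode it cannot add the client's IP to a header for the backend, so the client IP is lost. I ran a simple test today. The basic structures are:

A:

Client --> nginx (proxy) --> nginx (server)

B:

Client --> haproxy (proxy) --> nginx (server)

Baseline for comparison:

Client --> nginx (server)

First I tested whether the client could hold a persistent connection directly to the server, watching a packet capture on the server side. After confirming that worked, I moved on to the nginx and haproxy tests.

The nginx proxy configuration is as follows: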

[text]
server {
    location / {
        root html;
        proxy_set_header Connection "";
        proxy_http_version 1.1;
        proxy_intercept_errors on;
        proxy_set_header Host $http_host;
        proxy_set_header ORIG_CLIENT_IP $remote_addr;
        index index.html index.htm;
        proxy_pass http://httpd;
    }
}

upstream httpd {
    server 172.189.85.156:1080;
    keepalive 4;
    least_conn;
}
[/text]

A tcpflow capture on the server side shows:

[text]

0172.189.085.161.50970-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50970: HTTP/1.1 304 Not Modified

0172.189.085.161.50971-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50971: HTTP/1.1 404 Not Found

0172.189.085.161.50972-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50972: HTTP/1.1 304 Not Modified

0172.189.085.161.50973-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50973: HTTP/1.1 404 Not Found

0172.189.085.161.50974-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50974: HTTP/1.1 304 Not Modified

0172.189.085.161.50975-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50975: HTTP/1.1 404 Not Found

0172.189.085.161.50976-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50976: HTTP/1.1 304 Not Modified

0172.189.085.161.50977-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50977: HTTP/1.1 404 Not Found

0172.189.085.161.50978-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50978: HTTP/1.1 304 Not Modified

0172.189.085.161.50979-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50979: HTTP/1.1 404 Not Found

0172.189.085.161.50980-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50980: HTTP/1.1 304 Not Modified

0172.189.085.161.50981-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50981: HTTP/1.1 404 Not Found

0172.189.085.161.50982-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50982: HTTP/1.1 304 Not Modified

0172.189.085.161.50983-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50983: HTTP/1.1 404 Not Found

0172.189.085.161.50984-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50984: HTTP/1.1 304 Not Modified

0172.189.085.161.50985-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50985: HTTP/1.1 404 Not Found

0172.189.085.161.50986-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50986: HTTP/1.1 304 Not Modified

0172.189.085.161.50987-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50987: HTTP/1.1 404 Not Found

0172.189.085.161.50988-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50988: HTTP/1.1 304 Not Modified

0172.189.085.161.50989-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50989: HTTP/1.1 404 Not Found

0172.189.085.161.50990-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50990: HTTP/1.1 304 Not Modified

0172.189.085.161.50991-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50991: HTTP/1.1 404 Not Found

0172.189.085.161.50992-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50992: HTTP/1.1 304 Not Modified

0172.189.085.161.50993-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50993: HTTP/1.1 404 Not Found

0172.189.085.161.50994-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50994: HTTP/1.1 304 Not Modified

0172.189.085.161.50995-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50995: HTTP/1.1 404 Not Found

0172.189.085.161.50996-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50996: HTTP/1.1 304 Not Modified

0172.189.085.161.50997-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50997: HTTP/1.1 404 Not Found

[/text]

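As the capture shows, the proxy uses a different source port for every connection to the backend. At this point I was stumped: can nginx really not keep persistent connections to the backend?

After an hour or two of testing it finally occurred to me that the least-connections algorithm might be the cause. Sure enough, removing that line fixed it.

[Update: placing the keepalive 4; directive after least_conn also works.]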

[text]
server {
    location / {
        root html;
        proxy_set_header Connection "";
        proxy_http_version 1.1;
        proxy_intercept_errors on;
        proxy_set_header Host $http_host;
        proxy_set_header ORIG_CLIENT_IP $remote_addr;
        index index.html index.htm;
        proxy_pass http://httpd;
    }
}

upstream httpd {
    server 172.189.85.156:1080;
    keepalive 4;
}
[/text]

[text]

0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified

0172.189.085.161.50998-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 404 Not Found

0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified

0172.189.085.161.50998-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 404 Not Found

0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified

0172.189.085.161.50998-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 404 Not Found

0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified

0172.189.085.161.50998-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 404 Not Found

0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified

[/text]
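Instead of eyeballing the port numbers, connection reuse can be checked by counting requests per source port. This is a small Python sketch that assumes the one-line-per-message tcpflow format shown in this post; `count_request_ports` is a hypothetical helper name.

```python
import re

# Matches lines such as
# "0172.189.085.161.50970-0172.189.085.156.01080: GET /index.html HTTP/1.1"
LINE_RE = re.compile(
    r"^(\d+\.\d+\.\d+\.\d+)\.(\d+)-(\d+\.\d+\.\d+\.\d+)\.(\d+): (.*)$"
)


def count_request_ports(lines, server_port=1080):
    """Return {source_port: request_count} for GET requests to server_port."""
    counts = {}
    for line in lines:
        m = LINE_RE.match(line.strip())
        if not m:
            continue  # skip blank lines and anything in another format
        src_port = int(m.group(2))
        dst_port = int(m.group(4))
        payload = m.group(5)
        if dst_port == server_port and payload.startswith("GET "):
            counts[src_port] = counts.get(src_port, 0) + 1
    return counts


if __name__ == "__main__":
    sample = [
        "0172.189.085.161.50998-0172.189.085.156.01080: GET /index.html HTTP/1.1",
        "0172.189.085.156.01080-0172.189.085.161.50998: HTTP/1.1 304 Not Modified",
        "0172.189.085.161.50998-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1",
    ]
    print(count_request_ports(sample))
```

One port carrying many requests means keepalive is working; as many ports as requests means every request opened a new connection.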

Then I tested haproxy. I had some misconceptions about haproxy before. On one hand, the documentation says:

A last improvement in the communications is the pipelining mode. It still uses keep-alive, but the client does not wait for the first response to send the second request. This is useful for fetching large number of images composing a page :

[CON] [REQ1] [REQ2] ... [RESP1] [RESP2] [CLO] ...

This can obviously have a tremendous benefit on performance because the network latency is eliminated between subsequent requests. Many HTTP agents do not correctly support pipelining since there is no way to associate a response with the corresponding request in HTTP. For this reason, it is mandatory for the server to reply in the exact same order as the requests were received.

By default HAProxy operates in a tunnel-like mode with regards to persistent connections: for each connection it processes the first request and forwards everything else (including additional requests) to selected server. Once established, the connection is persisted both on the client and server sides. Use "option http-server-close" to preserve client persistent connections while handling every incoming request individually, dispatching them one after another to servers, in HTTP close mode. Use "option httpclose" to switch both sides to HTTP close mode. "option forceclose" and "option http-pretend-keepalive" help working around servers misbehaving in HTTP close mode.

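On the other hand, everyone "knows" that haproxy has no keepalive to the backend, and that even the current development version does not support it. That left me quite confused.

Today's main goal was actually to test haproxy. The configuration file is as follows: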

[text]
frontend httpf
    bind *:1689
    default_backend httpd

backend httpd
    mode http
    option forwardfor header ORIG_CLIENT_IP
    balance roundrobin
    server ser2 172.189.85.156:1080

frontend httpf2
    bind *:1690
    default_backend httpd2

backend httpd2
    mode http
    option httpclose
    balance roundrobin
    option forwardfor header ORIG_CLIENT_IP
    server ser2 172.189.85.156:1080
[/text]

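The capture showed directly that when accessing port 1689 the connection to the backend server is kept open, but the client IP is not available (ORIG_CLIENT_IP is not forwarded):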

[text]

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 304 Not Modified

0172.189.085.161.51026-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 404 Not Found

0172.189.085.161.51026-0172.189.085.156.01080: GET /index2.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 304 Not Modified

0172.189.085.161.51026-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 404 Not Found

0172.189.085.161.51026-0172.189.085.156.01080: GET /index2.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 304 Not Modified

0172.189.085.161.51026-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 404 Not Found

0172.189.085.161.51026-0172.189.085.156.01080: GET /index2.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 304 Not Modified

0172.189.085.161.51026-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 404 Not Found

0172.189.085.161.51026-0172.189.085.156.01080: GET /index2.html HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 304 Not Modified

0172.189.085.161.51026-0172.189.085.156.01080: GET /favicon.ico HTTP/1.1

0172.189.085.156.01080-0172.189.085.161.51026: HTTP/1.1 404 Not Found

[/text]

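When accessing port 1690, that is, with httpclose configured, the client IP is shown, but every connection is closed as soon as the request completes. I won't paste that capture.

The lesson: when something looks wrong, capture the packets and analyze them yourself to find out what is really going on.

Postscript:

Today I gathered some statistics, and it seems each connection from the proxying nginx to the backend is dropped after handling 100 requests. After checking the wiki carefully, this turns out to be caused by the backend nginx's default setting.

You can change the backend nginx's configuration to keepalive_requests 1000, so that each connection from the proxy to the backend handles 1000 requests before it is closed.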

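I bought a USB sound card on Taobao for about 20 yuan with free shipping. The chip is a CM108 (the CM108 is a bit better than the CM109). For a while I thought the driver was not loading, and I even installed the CM109 driver separately. Later I found that on the DB120 only the lower USB port recognizes the sound card; the upper one works with USB flash drives but cannot handle some devices (such as a USB banking token).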

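There is not much software to install:

# Install the USB drivers, filesystem modules, and the auto-mount tool

opkg install kmod-usb2 kmod-nls-utf8 kmod-usb-storage kmod-usb-ohci block-mount kmod-fs-ext4

# Install the sound card driver and the ALSA tools

opkg install alsa-utils kmod-usb-audio

# Install the player

opkg install madplay

Note that the sound card's default volume is the maximum, and the settings cannot be saved under OpenWrt, so I added the line /usr/sbin/alsactl init to rc.local.

Copy the music to the USB drive and it can be played:

madplay -v --tty-control -r /mnt/usb/music/*.mp3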

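Today it occurred to me to rewrite one of my earlier scripts to use multiple threads, because the old version spent most of its time blocked, and running it against several thousand machines in a batch took far too long.

I first rewrote the script with the old Thread module. Only after finishing and verifying it did I discover that this is the old module and no longer recommended, which was infuriating. Fortunately the new threads module is similar, so a few simple changes got it working, and the new module is much more powerful and more reliable. Here is a test: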

[text]
#!/usr/bin/perl
use strict;
use warnings;
use threads;
use Time::HiRes qw(sleep);    # the built-in sleep truncates 0.1 to 0

my @test = (1 .. 988);
srand();
multi_work();
print "ok\n";

# Run echon() for every item in @test with at most $JOBN threads at a time.
sub multi_work {
    my $JOBN = 30;
    my $jobs = 0;
    while (@test) {
        if ($jobs < $JOBN) {
            threads->create(\&echon, shift @test);
            $jobs++;
        } else {
            # The pool is full: reap finished threads, then wait briefly.
            foreach my $t (threads->list(threads::joinable)) {
                $t->join;
                $jobs--;
            }
            sleep 0.1;
        }
    }
    # All work has been handed out; wait for the remaining threads.
    while ($jobs > 0) {
        foreach my $t (threads->list(threads::joinable)) {
            $t->join;
            $jobs--;
        }
        sleep 0.5;
    }
}

# Worker: sleep for a random 0-7 seconds, then report.
sub echon {
    my $num = shift;
    my $t   = int(rand(8));
    sleep $t;
    print "i am working $num sleep $t\n";
    threads->exit(0);
}
[/text]

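If each thread needs to return a value, simply use return inside the function and fetch the value with my $val = $t->join. If the return value is a list, you can also return @list directly, but threads->create then needs an extra parameter. See the CPAN documentation for details.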

my $pid=threads->create({'context' => 'list'},$rfun,@para);

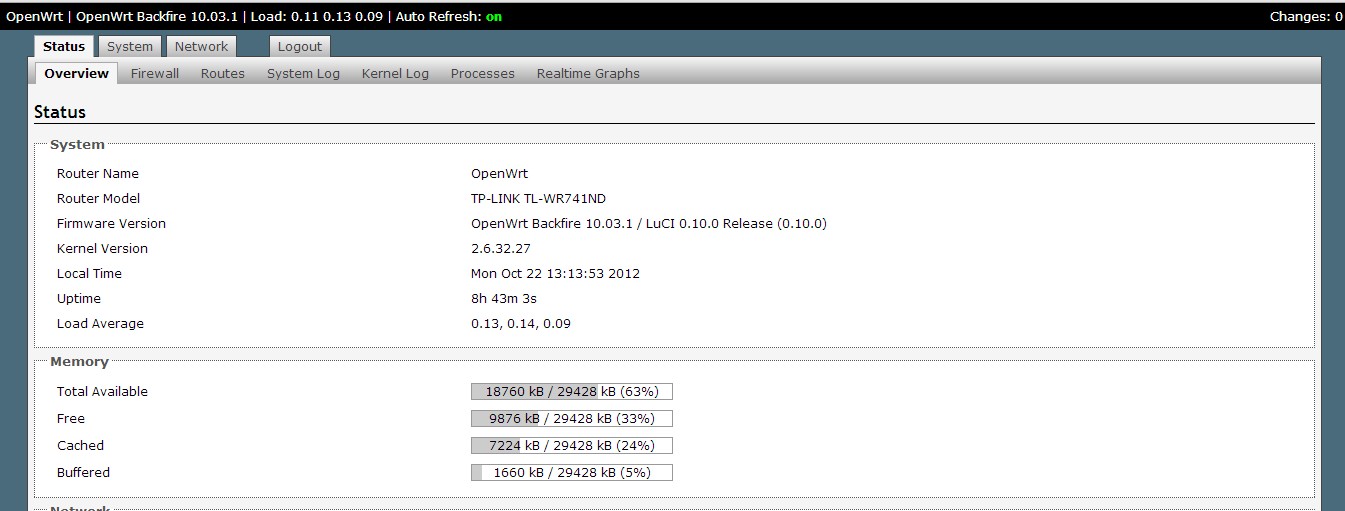

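Because the DB120's wireless is really rather slow, I had planned to buy an FW300R V2 or a TP-Link 841n v[3-7] to flash OpenWrt. Then I found a cheap 740n V4 on Taobao, only 39 yuan including the power adapter, so I ordered one right away. The 740n's AR7240 is clocked down to 350 MHz, so I took an indirect flashing route. As it happened, the 740n firmware I downloaded with the modified 400 MHz U-Boot failed the firmware header check, but the dd-wrt firmware bundled with it could be flashed directly. So the path was:

740n --> dd-wrt --> 741n --> 741n (400 MHz U-Boot) --> OpenWrt

To flash the 741n firmware from dd-wrt, do the following.

From dd-wrt, flash the firmware you downloaded; use cat /proc/mtd to confirm the partition name:

mtd -r write 741.bin linux

I won't go through the whole process in detail. The intermediate firmware I needed was downloaded from here.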

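I had not used the company-issued 3G card for more than half a year, mainly because I rarely travel. Today I tested setting up dial-up under Debian, and it turned out to be fairly simple.

I mainly followed existing articles online. You only need to install wvdial and usb-modeswitch.

Then do some simple configuration. Below is the configuration file I used: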

# cat wvdial.conf

[Dialer Defaults]

Init = AT+CGDCONT=1,"IP","uninet"

Init1 = ATZ

Init2 = ATQ0 V1 E1 S0=0 &C1 &D2 +FCLASS=0

Init6 = AT+CFUN=1

Modem Type = Analog Modem

Baud = 115200

New PPPD = yes

Modem = /dev/ttyUSB2

ISDN = 0

Phone = *99#

Username = any

Auto DNS = 0

Password = any

New PPPD = yes

Idle Seconds = 300

Stupid Mode = 1

The meaning of every option can be looked up with man wvdial.conf. For example, Stupid Mode is described as:

Stupid Mode

When wvdial is in Stupid Mode, it does not attempt to interpret any prompts from the terminal server. It starts pppd immediately after the modem connects. Apparently there are ISP's that actually give you a login prompt, but work only if you start PPP, rather than logging in. Go figure. Stupid Mode is (naturally) disabled by default.

Before I added this option, wvdial would just sit waiting after startup. With the option set, it starts dialing immediately.